When a self-driving car crashes because its software or AI failed, working out who is legally responsible is harder than in an ordinary collision. This article examines how liability is assigned in those cases, with a focus on the legal complexities and ethical considerations surrounding accidents involving autonomous vehicles.

It covers the legal frameworks that apply, the key factors that influence liability, and case studies that show how this area of law is evolving.

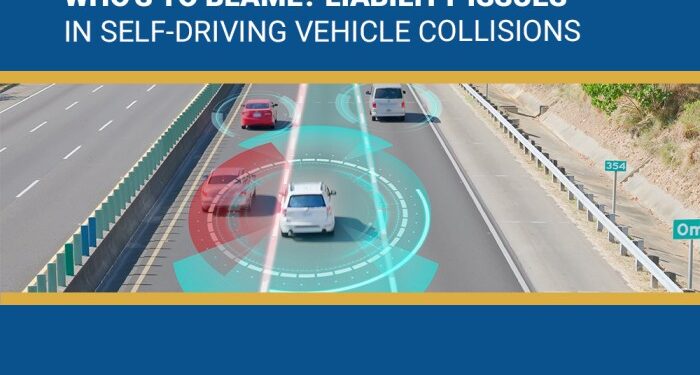

Liability in Self-Driving Car Accidents

Determining liability in a self-driving car accident can be complex. In a traditional car accident, a human driver is typically held responsible for the negligence or error that led to the collision. When software and AI are in control of the vehicle, responsibility can shift away from the driver and toward the parties that built the system.

Liability Determination When AI and Software Fail

In situations where the software or AI of a self-driving car fails and causes an accident, liability may not rest solely on the human occupant or owner of the vehicle. Instead, the manufacturer of the autonomous vehicle or the developers of the technology could be held liable for any malfunctions or defects that contributed to the crash.

The open question in each case is whether responsibility lies with the human operator, the technology creators, or a combination of both.

Legal Framework for Self-Driving Car Accident Liability

The legal framework surrounding liability for self-driving car accidents is still evolving as this technology becomes more prevalent on the roads. In many jurisdictions, laws are being updated to address the unique challenges posed by autonomous vehicles. Some key considerations include whether traditional liability laws need to be adapted to reflect the new reality of AI-controlled vehicles, how to determine fault in accidents involving both autonomous and human-driven vehicles, and the role of insurance companies in covering damages in these cases.

Factors Influencing Liability

Liability for a self-driving car accident caused by a software or AI failure depends largely on the roles of four parties: manufacturers, software developers, car owners, and insurance companies.

Role of Manufacturers

Manufacturers are often the first party examined when a self-driving car crashes. They are responsible for designing and building the vehicle, including the software and AI systems that control it. If a defect in the software or AI system leads to an accident, the manufacturer may be held liable for damages, for example under product liability principles.

Role of Software Developers

Software developers are also key players in the liability chain. They are responsible for creating and updating the algorithms that power the self-driving car’s decision-making processes. If a flaw in the software causes an accident, the developers may share liability with the manufacturers.

Role of Car Owners

Car owners have a responsibility to properly maintain and update the self-driving car’s software and AI systems. Failure to do so could result in accidents for which the owner may be held liable. Car owners may also be responsible for overseeing the vehicle’s operation and ensuring it is safe to use on the road.

Insurance Company Handling

Insurance companies play a critical role in handling liability claims in self-driving car accidents. They must assess the circumstances of the accident, including the role of software and AI failure, to determine fault and compensation. Insurance policies for self-driving cars may need to be updated to address these emerging issues and allocate liability appropriately.

Legal Precedents and Case Studies

Legal precedents and case studies help shape liability laws for self-driving car accidents. They show how courts, investigators, and regulators have treated incidents in which software and AI failures raised liability questions, and they inform how future incidents are likely to be handled.

Landmark Cases in Self-Driving Car Accidents

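- In March 2018, an Uber test vehicle operating in self-driving mode struck and killed a pedestrian in Tempe, Arizona. The National Transportation Safety Board (NTSB) investigation found that the vehicle’s software detected the pedestrian several seconds before impact but failed to classify her correctly or predict her path, and that the human safety operator was not watching the road. This case raised questions about the liability of the technology company versus the vehicle operator.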

- In the 2016 Tesla Autopilot crash, a Model S collided with a tractor-trailer in Florida, killing the driver. It was widely reported as the first known U.S. fatality in a vehicle operating with Autopilot engaged. Autopilot is a driver-assistance system rather than a fully self-driving one, and the investigation highlighted its limitations and raised concerns about the gap between drivers’ expectations and the actual capabilities of such systems.

- Waymo v. Uber was a high-profile legal battle over trade secrets and intellectual property related to self-driving technology, and it settled in 2018. It did not decide any question of accident liability, but it showed how much legal risk surrounds proprietary autonomous-vehicle technology and how closely companies guard it.

Ethical Considerations

The introduction of self-driving cars raises a range of ethical considerations, particularly around liability when the software and AI fail. These considerations sit at the intersection of technology, morality, and accountability.

Ethical Dilemmas

- Regulators: Regulators are faced with the dilemma of setting standards and regulations for self-driving cars that balance innovation with safety. They must consider how to hold companies accountable for accidents caused by software failures without stifling technological advancements.

- Manufacturers: Manufacturers of autonomous vehicles are tasked with creating systems that prioritize safety above all else. They must grapple with decisions regarding the level of autonomy, risk assessment, and transparency in disclosing potential software vulnerabilities.

- Consumers: Consumers using self-driving cars face ethical dilemmas related to trust in the technology. They must decide whether to place their faith in AI systems to make split-second decisions that could impact their safety and the safety of others on the road.

Application of Ethical Frameworks

Ethical frameworks provide a structured approach to navigating liability challenges in autonomous vehicles. By applying principles such as utilitarianism, deontology, and virtue ethics, stakeholders can evaluate the ethical implications of their decisions and actions in the context of self-driving car accidents.

These frameworks offer guidance on how to prioritize safety, accountability, and transparency in the development and deployment of autonomous vehicles.

Closing Summary

In conclusion, liability for self-driving car accidents sits within an intricate web of legal, ethical, and technological challenges. As the technology continues to advance, clear liability guidelines become increasingly important for all stakeholders involved.

FAQs

What factors influence liability in self-driving car accidents?

Key factors include the role of manufacturers, software developers, and car owners, as well as how insurance companies handle claims.

Are there any landmark cases that have shaped liability laws for autonomous vehicles?

Yes. Incidents such as the 2018 Uber crash in Tempe, Arizona, and the 2016 Tesla Autopilot crash have influenced how liability for self-driving car accidents is understood.

How do ethical considerations play a role in determining liability when software and AI fail in self-driving car accidents?

Ethical frameworks are crucial in navigating liability challenges, as they help address dilemmas faced by regulators, manufacturers, and consumers.